It will be using the default unoptimized PyTorch path.

Install and Run Automatic1111 Stable Diffusion WebUIįollowing the instructions here, install Automatic1111 Stable Diffusion WebUI without the optimized model.

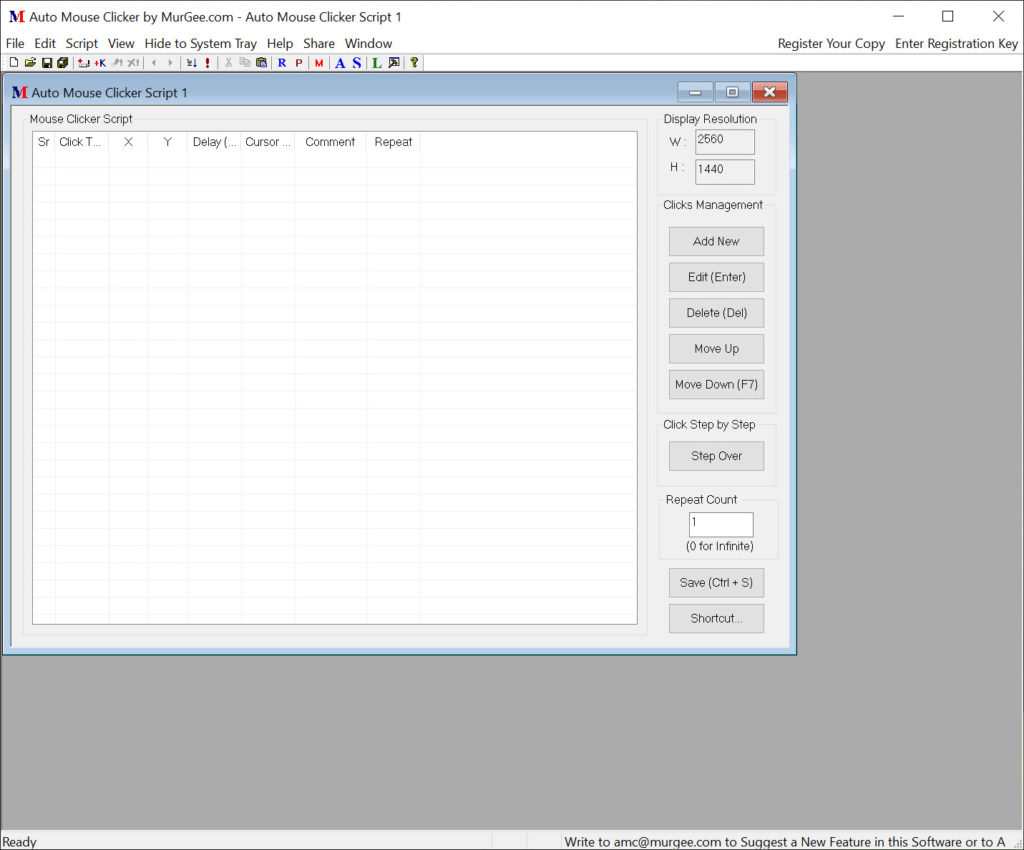

python stable_diffusion.py -interactive -num_images 2Ĥ.To test the optimized model, run the following command:.Use the following command to see what other models are supported: python stable_diffusion.py –help The o ptimized model will be stored at the following directory, keep this open for later: olive\examples\directml\stable_diffusion\models\optimized\runwayml. The model folder will be called “stable-diffusion-v1-5”. Generate an ONNX model and optimize it for run-time.cd olive\examples\directml\stable_diffusion.Important to note that Python 3.9 is required. Create a new environment by sequentially entering the following commands into the terminal, followed by the enter key.(Following the instruction from Olive, we can generate optimized Stable Diffusion model using Olive) Generate Optimized Stable Diffusion Models using Microsoft Olive Quantization: converts most layers from FP32 to FP16 to reduce the model's GPU memory footprint and improve performance.Ĭombined, the above optimizations enable DirectML to leverage AMD GPUs for greatly improved performance when performing inference with transformer models like Stable Diffusion.ģ.Transformer graph optimization: fuses subgraphs into multi-head attention operators and eliminating inefficient from conversion.Model conversion: translates the base models from PyTorch to ONNX.The DirectML sample for Stable Diffusion applies the following techniques: Olive greatly simplifies model processing by providing a single toolchain to compose optimization techniques, which is especially important with more complex models like Stable Diffusion that are sensitive to the ordering of optimization techniques. Microsoft Olive is a Python tool that can be used to convert, optimize, quantize, and auto-tune models for optimal inference performance with ONNX Runtime execution providers like DirectML. 
Driver: AMD Software: Adrenalin Edition™ 23.7.2 or newer ( ).Platform having AMD Graphics Processing Units (GPU).Ensure Anaconda/Miniconda directory is added to PATH.Installed Anaconda/Miniconda ( Miniconda for Windows).Prepared by Hisham Chowdhury (AMD), Lucas Neves (AMD), and Justin Stoecker (Microsoft)ĭid you know you can enable Stable Diffusion with Microsoft Olive under Automatic1111(Xformer) to get a significant speedup via Microsoft DirectML on Windows? Microsoft and AMD have been working together to optimize the Olive path on AMD hardware, accelerated via the Microsoft DirectML platform API and the AMD User Mode Driver’s ML (Machine Learning) layer for DirectML allowing users access to the power of the AMD GPU’s AI (Artificial Intelligence) capabilities.
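Before running the Olive sample, it can help to confirm the software prerequisites from inside the activated environment. The following is a minimal Python sketch, not part of the Olive or AMD tooling: the `check_prerequisites` function and its report keys are hypothetical, it assumes it is run from the directory that contains the `olive` checkout, and it does not try to detect the GPU or the Adrenalin driver version. The model folder layout is the one given in the post.

```python
import shutil
import sys
from pathlib import Path

# Folder layout taken from the post; the helper below is hypothetical.
OPTIMIZED_MODEL_DIR = Path(
    "olive", "examples", "directml", "stable_diffusion",
    "models", "optimized", "runwayml", "stable-diffusion-v1-5",
)


def check_prerequisites(root: Path = Path(".")) -> dict:
    """Report which of the post's software requirements appear to be met.

    Illustrative sketch only: GPU and driver checks are not attempted.
    """
    return {
        # The Olive sample requires Python 3.9 in the active environment.
        "python_3_9": sys.version_info[:2] == (3, 9),
        # Anaconda/Miniconda must be on PATH, so `conda` should resolve.
        "conda_on_path": shutil.which("conda") is not None,
        # This folder only exists after the optimization step has run.
        "optimized_model": (root / OPTIMIZED_MODEL_DIR).is_dir(),
    }


if __name__ == "__main__":
    for name, ok in check_prerequisites().items():
        print(f"{name:16} {'OK' if ok else 'missing'}")
```

Running it before optimization will normally report `optimized_model` as missing; rerunning it afterwards is a quick way to confirm where the "stable-diffusion-v1-5" folder ended up.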

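To make the FP16 quantization step more concrete, the sketch below uses only Python's standard `struct` module to pack the same weights as FP32 (`'f'`) and FP16 (`'e'`) and compare their size and rounding error. It illustrates the storage trade-off in general and is not how Olive performs the conversion; `fp16_footprint` is a hypothetical helper.

```python
import struct


def fp16_footprint(weights):
    """Return (fp32_bytes, fp16_bytes, max_abs_error) for a list of floats.

    Illustrative only: packs the values as IEEE 754 single ('f') and
    half ('e') precision using the standard struct module.
    """
    n = len(weights)
    fp32 = struct.pack(f"<{n}f", *weights)
    # Raises OverflowError for magnitudes FP16 cannot represent (above ~65504).
    fp16 = struct.pack(f"<{n}e", *weights)
    fp32_vals = struct.unpack(f"<{n}f", fp32)
    fp16_vals = struct.unpack(f"<{n}e", fp16)
    # Largest difference introduced by dropping from FP32 to FP16.
    max_err = max((abs(a - b) for a, b in zip(fp32_vals, fp16_vals)), default=0.0)
    return len(fp32), len(fp16), max_err


weights = [0.1, -1.5, 3.14159, 1e-3]
fp32_bytes, fp16_bytes, err = fp16_footprint(weights)
print(fp32_bytes, fp16_bytes)  # 16 8
print(f"max abs error: {err:.2e}")
```

Each FP16 value takes 2 bytes instead of 4, so the packed weights shrink by half, at the cost of a small rounding error on values such as 0.1 that FP16 cannot represent exactly.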